Introduction to Automated Data Processing

Automated data processing is the use of computer systems and software to collect, clean, transform, and analyze large volumes of data with minimal human intervention. Businesses now generate far more data each day than staff can manage by hand, which is why automated systems have been so widely adopted. These systems streamline operations, reduce errors, and save time across many industries.

With the rise of big data, the need for automated data processing has become even more critical. Organizations leverage these systems to process and analyze data quickly, leading to better decision-making and improved operational efficiency. From financial institutions to healthcare providers, automated data processing plays a pivotal role in enhancing performance and achieving strategic goals.

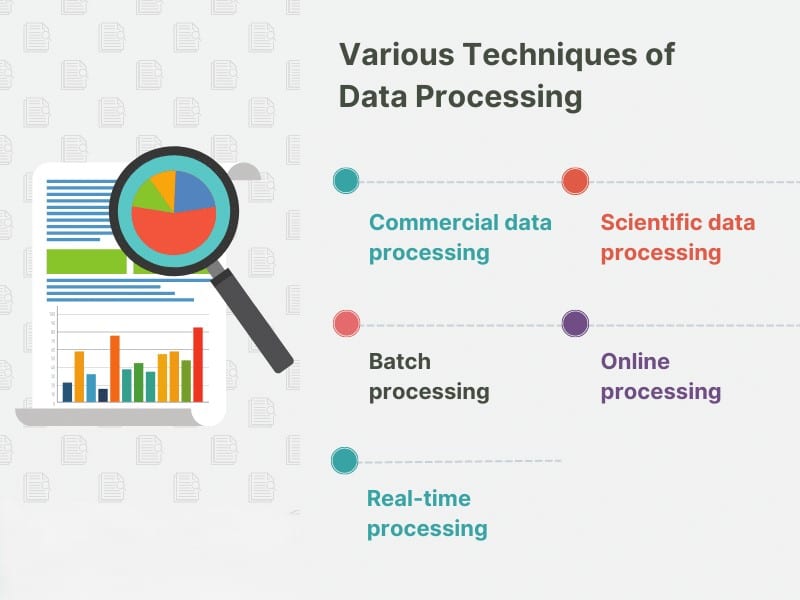

Key Data Processing Techniques

Data processing techniques transform raw data into meaningful information. The main stages are data collection, data cleaning, data transformation, and data analysis. Each stage affects the accuracy and reliability of the final result, so an error introduced early carries through to the analysis.

- Data Collection: The first step in data processing is gathering data from various sources. This can include databases, online forms, sensors, and more. Automated systems streamline this process by integrating with different data sources, ensuring comprehensive data collection.

- Data Cleaning: Raw data often contains errors, duplicates, and inconsistencies. Data cleaning involves identifying and correcting these issues to ensure the data is accurate and reliable. Automated tools can efficiently handle this task, reducing the time and effort required for manual cleaning.

- Data Transformation: Once the data is cleaned, it needs to be converted into a format suitable for analysis. This can involve normalizing values, aggregating records, and converting types or units so the data is ready for the next step.

- Data Analysis: The final step is analyzing the data to extract meaningful insights. Automated data processing systems use advanced algorithms and machine learning techniques to analyze large datasets quickly and accurately.
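The four stages above can be sketched as a small pipeline. This is a minimal illustration using only the Python standard library, not a production design: the `RAW_CSV` sample, the field names, and the function names (`collect`, `clean`, `transform`, `analyze`, `run_pipeline`) are all hypothetical and chosen for this example.

```python
import csv
import io
from statistics import mean

# Hypothetical raw input. In practice this would come from a database,
# an online form, or a sensor feed.
RAW_CSV = """order_id,region,amount
1001,north,250.00
1002,South,99.50
1002,South,99.50
1003,north,
1004,south,180.50
"""


def collect(source):
    """Collection: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(source)))


def clean(rows):
    """Cleaning: drop rows with a missing amount and repeated order IDs."""
    seen = set()
    cleaned = []
    for row in rows:
        if not row["amount"].strip():
            continue  # incomplete record
        if row["order_id"] in seen:
            continue  # duplicate record
        seen.add(row["order_id"])
        cleaned.append(row)
    return cleaned


def transform(rows):
    """Transformation: normalize region casing and convert types."""
    return [
        {
            "order_id": int(row["order_id"]),
            "region": row["region"].strip().lower(),
            "amount": float(row["amount"]),
        }
        for row in rows
    ]


def analyze(rows):
    """Analysis: average order amount per region."""
    by_region = {}
    for row in rows:
        by_region.setdefault(row["region"], []).append(row["amount"])
    return {region: round(mean(amounts), 2) for region, amounts in by_region.items()}


def run_pipeline(source):
    """Chain the four stages in order."""
    return analyze(transform(clean(collect(source))))


if __name__ == "__main__":
    print(run_pipeline(RAW_CSV))  # {'north': 250.0, 'south': 140.0}
```

Keeping each stage as its own function means a stage can be tested or replaced independently, for example swapping the CSV reader for a database query without touching the cleaning logic.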

Essential Data Processing Tools

Various tools are available to facilitate automated data processing. These tools range from software applications to comprehensive platforms designed to handle different aspects of data processing.

- Apache Hadoop: A framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models.

- Apache Spark: An open-source unified analytics engine for big data processing, with built-in modules for streaming, SQL, machine learning, and graph processing.

- Talend: A data integration platform that lets users connect, transform, and manage data across various sources.

- RapidMiner: A data science platform that provides an integrated environment for data preparation, machine learning, deep learning, text mining, and predictive analytics.

- KNIME: An open-source platform for building data science workflows and applications, used to analyze and interpret data.

Using these tools, businesses can automate various aspects of data processing, from data collection to analysis, ensuring efficient and accurate data management.
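The "simple programming models" mentioned for Hadoop are best known through MapReduce. The sketch below imitates its three phases (map, shuffle, reduce) in plain Python on a single machine. It does not use any Hadoop API, and the function names are hypothetical. In a real cluster, the map and reduce steps would run on different nodes.

```python
from collections import defaultdict
from itertools import chain


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(pairs):
    """Shuffle: group all emitted values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce: combine the values for each key into a single result."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(documents):
    """Run the three phases in sequence over a collection of documents."""
    mapped = chain.from_iterable(map_phase(doc) for doc in documents)
    return reduce_phase(shuffle_phase(mapped))


if __name__ == "__main__":
    docs = ["big data", "Big pipelines", "data data"]
    print(word_count(docs))  # {'big': 2, 'data': 3, 'pipelines': 1}
```

Because each call to `map_phase` depends only on its own document, the map step can be distributed freely, which is what makes the model suitable for clusters.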

Benefits of Automated Data Processing

Automated data processing offers numerous benefits, making it a valuable asset for organizations across different industries.

- Efficiency: Automated systems can process large volumes of data much faster than manual methods. This efficiency allows businesses to handle more data in less time, improving overall productivity.

- Accuracy: Automated systems apply the same rules to every record, which removes the transcription and calculation mistakes common in manual work. The resulting data is more consistent and easier to trust.

- Cost Savings: Automation reduces the need for manual labor, leading to significant cost savings. Organizations can allocate resources more effectively, focusing on strategic initiatives rather than routine data processing tasks.

- Scalability: Well-designed automated systems can scale to handle growing data volumes. As a business expands, capacity can be added to absorb the increased load without degrading performance.

- Real-Time Processing: Many automated data processing systems offer real-time processing capabilities. This enables organizations to access up-to-date information and make timely decisions.

Efficient Data Processing Methods

Efficient data processing methods are crucial for maximizing the benefits of automation. These methods include batch processing, real-time processing, and parallel processing.

- Batch Processing: Involves processing data in large batches at scheduled intervals. This method is suitable for tasks that do not require immediate results, such as generating reports.

- Real-Time Processing: Involves processing data as it is generated. This method is ideal for applications that require immediate feedback, such as monitoring systems and online transactions.

- Parallel Processing: Involves dividing large data sets into smaller chunks and processing them simultaneously. This method leverages the power of multiple processors to speed up data processing.
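The chunk-and-combine idea behind parallel processing can be sketched with Python's `concurrent.futures` module. This is an illustration under stated assumptions: `process_chunk` is a hypothetical stand-in for real per-chunk work, and a thread pool is used so that the example runs anywhere without extra setup.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(items, size):
    """Split a list into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def process_chunk(chunk):
    """Hypothetical per-chunk work: sum of squares of the values."""
    return sum(x * x for x in chunk)


def process_parallel(items, chunk_size=1000, workers=4):
    """Process chunks concurrently, then combine the partial results."""
    chunks = chunked(items, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(process_chunk, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(process_parallel(list(range(10)), chunk_size=3))  # 285
```

For CPU-bound work in CPython, threads do not run Python code on several cores at once. `ProcessPoolExecutor` from the same module offers the same `map` interface and is the usual substitute when the goal is to use multiple processors.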

Data Processing Tools and Automated Data Processing

Efficient data processing relies on tools that streamline and automate each stage of the workflow, so that organizations can extract insights promptly. Alongside the open-source frameworks covered above, managed cloud services are widely used. Common options include:

- Apache Hadoop: An open-source framework that allows for the distributed processing of large data sets across clusters of computers.

- Apache Spark: A fast and general-purpose cluster computing system that provides in-memory data processing capabilities.

- Microsoft Azure Data Factory: A cloud-based data integration service that allows you to create, schedule, and manage data pipelines.

- AWS Glue: A fully managed extract, transform, and load (ETL) service from Amazon Web Services that makes it easy to prepare and load data for analytics.

- Google Cloud Dataflow: A fully managed service for executing Apache Beam pipelines for stream and batch processing.

Within a pipeline built on these tools, software and algorithms take over tasks that would otherwise require manual intervention, which reduces the risk of human error and speeds up decision-making. The automated tasks typically include:

- Data Ingestion: Automatically collecting and importing data from various sources into a centralized repository.

- Data Transformation: Automatically converting raw data into a format that is suitable for analysis.

- Data Analysis: Automatically analyzing data to identify patterns, trends, and anomalies.

- Data Visualization: Automatically creating visual representations of data to aid in understanding and decision-making.

By leveraging these tools and techniques, organizations can streamline their data processing workflows and extract maximum value from their data.
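As one concrete example of automated analysis, anomalies in a numeric series can be flagged with a z-score test. The sketch below is a deliberately simple approach using the standard library. The function name `find_anomalies` and the default threshold of 2.0 are assumptions for illustration, not a recommendation for any particular dataset.

```python
from statistics import mean, stdev


def find_anomalies(values, threshold=2.0):
    """Return (index, value) pairs whose z-score magnitude exceeds `threshold`."""
    if len(values) < 2:
        return []  # not enough data to estimate spread
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [
        (index, value)
        for index, value in enumerate(values)
        if abs(value - mu) / sigma > threshold
    ]


if __name__ == "__main__":
    readings = [10, 11, 9, 10, 12, 10, 11, 9, 10, 50]
    print(find_anomalies(readings))  # [(9, 50)]
```

A z-score test assumes roughly bell-shaped data and is itself distorted by extreme outliers, so real systems often use more robust statistics or learned models.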

Real-World Applications and Examples

Automated data processing has numerous real-world applications across various industries. Here are some examples:

- E-commerce Product Recommendations: Automated data processing systems analyze customer behavior and preferences to generate personalized product recommendations. This enhances the customer experience and drives sales.

- Fraud Detection in Banking: Banks use automated data processing to analyze transactions and identify fraudulent activity. By processing data in real time, these systems can detect and block suspicious transactions before they affect customers.

- Health Monitoring: Healthcare providers use automated data processing to monitor patient health in real time. This enables timely interventions and improves patient outcomes.

- Smart Cities: Automated data processing systems are used in smart cities to manage traffic, monitor air quality, and optimize energy consumption. These systems enhance urban living by improving efficiency and sustainability.
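To make the real-time pattern in the fraud detection example concrete, the sketch below checks each transaction as it arrives against the account's running average. It is a toy rule, not how any bank's system works. The class name `SpendingMonitor`, the `factor` of 3.0, and the `min_history` of 3 are all hypothetical.

```python
from collections import defaultdict


class SpendingMonitor:
    """Flags a transaction that exceeds `factor` times the account's running average."""

    def __init__(self, factor=3.0, min_history=3):
        self.factor = factor
        self.min_history = min_history
        self._totals = defaultdict(float)
        self._counts = defaultdict(int)

    def observe(self, account, amount):
        """Evaluate one transaction, then record it. Returns True if suspicious."""
        count = self._counts[account]
        suspicious = False
        if count >= self.min_history:
            average = self._totals[account] / count
            suspicious = amount > self.factor * average
        # Update state only after the decision, so a transaction is
        # never compared against itself.
        self._totals[account] += amount
        self._counts[account] += 1
        return suspicious


if __name__ == "__main__":
    monitor = SpendingMonitor()
    for amount in [20, 25, 30, 22, 400]:
        print(amount, monitor.observe("acct-1", amount))
    # Only the final 400 is flagged.
```

The monitor keeps just two numbers per account, so each decision takes constant time, which is the property that lets a check like this run inline with the transaction stream.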

Data Processing Requirements

For effective automated data processing, organizations must fulfill specific requirements. These include ensuring data quality by collecting accurate, complete, and reliable data. High-quality data is crucial for generating meaningful insights. Additionally, organizations need to implement robust data security measures, including encryption, access controls, and regular security audits, to protect data from unauthorized access and breaches.

Furthermore, organizations must focus on data integration to create a unified view by integrating data from various sources. This requires compatibility between different systems and seamless data flow. They also need to choose the right storage solutions, such as cloud storage, data warehouses, and databases, to accommodate large volumes of data. Lastly, compliance with legal and regulatory requirements related to data processing, including data privacy laws, industry standards, and organizational policies, is essential.

- Data Quality: Ensure data is accurate, complete, and reliable.

- Data Security: Implement encryption, access controls, and security audits.

- Data Integration: Integrate data from various sources for a unified view.

- Data Storage: Choose appropriate storage solutions for large volumes of data.

- Compliance: Adhere to legal and regulatory requirements for data processing.
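The data quality requirement can be checked automatically before records enter a pipeline. The sketch below counts incomplete and duplicate records. The function name `quality_report`, the field names, and the rule that `None` or an empty string means "missing" are assumptions for illustration.

```python
def quality_report(records, required_fields):
    """Count total, incomplete, and duplicate records.

    A record is incomplete if any required field is missing or empty.
    A record is a duplicate if its required-field values repeat an
    earlier record. Field values are assumed to be hashable scalars.
    """
    seen = set()
    report = {"total": 0, "incomplete": 0, "duplicates": 0}
    for record in records:
        report["total"] += 1
        if any(record.get(field) in (None, "") for field in required_fields):
            report["incomplete"] += 1
        key = tuple(record.get(field) for field in required_fields)
        if key in seen:
            report["duplicates"] += 1
        seen.add(key)
    return report


if __name__ == "__main__":
    sample = [
        {"id": 1, "email": "a@example.com"},
        {"id": 2, "email": ""},
        {"id": 1, "email": "a@example.com"},
        {"id": 3},
    ]
    print(quality_report(sample, ["id", "email"]))
    # {'total': 4, 'incomplete': 2, 'duplicates': 1}
```

A report like this can serve as a gate: if the incomplete or duplicate counts exceed an agreed limit, the batch is held back for review instead of being loaded.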

Challenges in Automated Data Processing

Despite its benefits, automated data processing comes with its own set of challenges. These include:

- Data Privacy: Ensuring that personal and sensitive data is protected from unauthorized access and misuse.

- Data Complexity: Handling complex and unstructured data can be challenging, requiring advanced tools and techniques.

- Scalability Issues: Scaling automated data processing systems to accommodate growing data volumes can be difficult.

- Integration Challenges: Integrating data from different sources and formats can be complex and time-consuming.

- Cost: Implementing and maintaining automated data processing systems can be expensive, requiring significant investment.
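One common way to reduce the data privacy risk is to pseudonymize sensitive fields before data reaches analysts. The sketch below replaces chosen fields with a keyed hash using Python's standard `hmac` and `hashlib` modules. The function names, the field names, and the demo key are hypothetical, and key storage and rotation are out of scope here.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Return a keyed SHA-256 digest of `value` as a hex string."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record, sensitive_fields, secret_key):
    """Copy a record, replacing each sensitive field with its pseudonym."""
    return {
        field: pseudonymize(str(value), secret_key) if field in sensitive_fields else value
        for field, value in record.items()
    }


if __name__ == "__main__":
    key = b"demo-key-do-not-use-in-production"  # hypothetical key
    masked = mask_record({"name": "Ada", "city": "Paris"}, {"name"}, key)
    print(masked["city"])       # Paris
    print(len(masked["name"]))  # 64
```

The same input and key always produce the same pseudonym, so records can still be joined across datasets. This is pseudonymization rather than anonymization: anyone holding the key can recompute the mapping for a known value.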

Future Trends in Automated Data Processing

The future of automated data processing looks promising, with several emerging trends set to shape the industry.

- AI and Machine Learning Integration: The integration of AI and machine learning with automated data processing will enhance data analysis capabilities, enabling more accurate predictions and insights.

- Big Data Analytics: The growing volume of data will drive the demand for advanced analytics tools and techniques to process and analyze big data.

- Data Science Automation: Automation in data science will streamline workflows and reduce the time required for data analysis.

- Data Ethics: The increasing importance of data ethics will lead to the development of frameworks and guidelines to ensure ethical data processing practices.

Conclusion

Automated data processing is a vital component of modern data management. It offers numerous benefits, including efficiency, accuracy, and cost savings. By adopting advanced data processing techniques and tools, organizations can enhance their data processing capabilities and achieve better outcomes. However, it is essential to address the challenges associated with automated data processing to fully realize its potential.

As technology continues to evolve, the future of automated data processing looks bright. With the integration of AI and machine learning, businesses can expect even more advanced data processing solutions that will drive innovation and growth.

What are the primary benefits of automated data processing?

Automated data processing offers several benefits, including increased efficiency, improved accuracy, cost savings, scalability, and real-time processing capabilities. By minimizing human intervention, organizations can handle large volumes of data quickly and accurately, leading to better decision-making and enhanced operational performance.

What tools are commonly used for automated data processing?

Common tools for automated data processing include programming languages such as Python and R, frameworks such as Apache Hadoop and Apache Spark, the data integration platform Talend, and the analytics platform KNIME. These tools help streamline data collection, cleaning, transformation, and analysis.

How does automated data processing impact data security?

Automated systems can strengthen data security by applying encryption, access controls, and regular security audits consistently across every dataset. However, these systems must be properly configured and maintained, because a misconfigured pipeline can expose data to unauthorized access and breaches at scale.